Social scientists often collect primary data by inviting human participants to the laboratory and asking them to make decisions or answer survey questions. Such research – called human subjects research – is overseen by ethics committees at universities to ensure that researchers are protecting the rights and welfare of study participants.

One important ethical issue is the extent to which human subjects are deceived. A famous example of research using deception is Milgram’s obedience studies from the 1960s, in which participants were told to deliver strong electrical shocks to a confederate (i.e., an actor working for the researcher) in another room. The shocks were never administered, but the confederate in the other room acted as if they were. Today, deception in psychology is much less extreme, in large part due to critiques of the psychological distress that Milgram’s studies caused participants.

While psychologists and ethics committees grapple with what is and is not appropriate when it comes to deception, in economics it is almost a religion that deception is bad. This is generally due to a desire to maintain control: the concern is that if participants in a study don’t believe the researcher, their decisions will be unreliable. Reviewers of academic papers in economics are known to summarily reject papers in which deception of any sort is used. At the same time, reviewers, editors and authors often do not see eye-to-eye on what constitutes deception. For example, is it deceptive to tell a participant that there will be 10 questions in a study, but then actually ask 20 questions? And is it deceptive to tell participants that an outcome is “random” when in fact the underlying probability of an outcome is 25% or 75%?

Together with my co-authors Gary Charness (UC-Santa Barbara) and Jeroen van De Ven (University of Amsterdam), I conducted a survey of economists and recent study participants to answer these questions and to understand what is and is not acceptable when it comes to deception in economics. About half of experimental economists worldwide (788) completed our survey. In addition, 126 former participants of research studies from three different universities also participated.

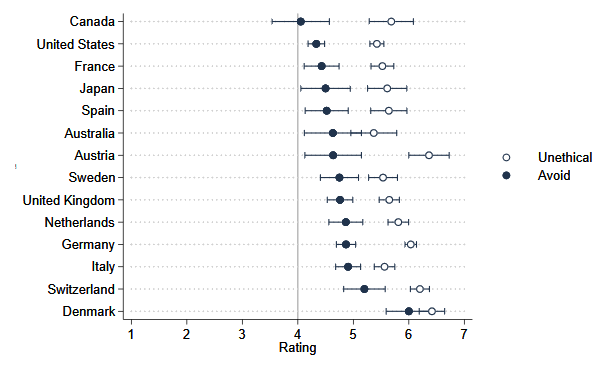

We found a large diversity in views about deception. Economists thought that using confederates (or bots) and strategically omitting relevant information were the most deceptive of the scenarios we tested. Students, on the other hand, seemed more upset about deception when it required them to do more work in the study than they initially expected, and were less upset about deception involving confederates or omission of information. Views also differed by country, with (for example) North Americans expressing less negative views on deception than Europeans (see figure).

There is more uniformity on the importance of avoiding deception. Quite a few researchers regard minor deception as appropriate when no alternatives are available and the data are important. In this way, economists are similar to psychologists, who argue for permitting deception on the grounds of advancing science.

Perhaps most surprising was the fact that students appeared to be largely unaware of the existence of no-deception policies in economics labs. Fewer than 20 percent indicated that they knew about the policy in their lab. Despite this, most students said they would be likely to return to participate in future experiments if deception were used.

Our data suggest that despite the strong belief in economics that deception is bad, what constitutes deception in economics is less clear. Moreover, costs and benefits should be weighed, such that some deception might be appropriate when the topic is important and there is no other way to gather data. Importantly, if the purpose of a no-deception policy is to maintain a sense of control, then labs around the world need to do a better job of informing participants about no-deception policies.
